Building a Smarter Ad Platform: A Multi-Agent Architecture Guide

Introduction

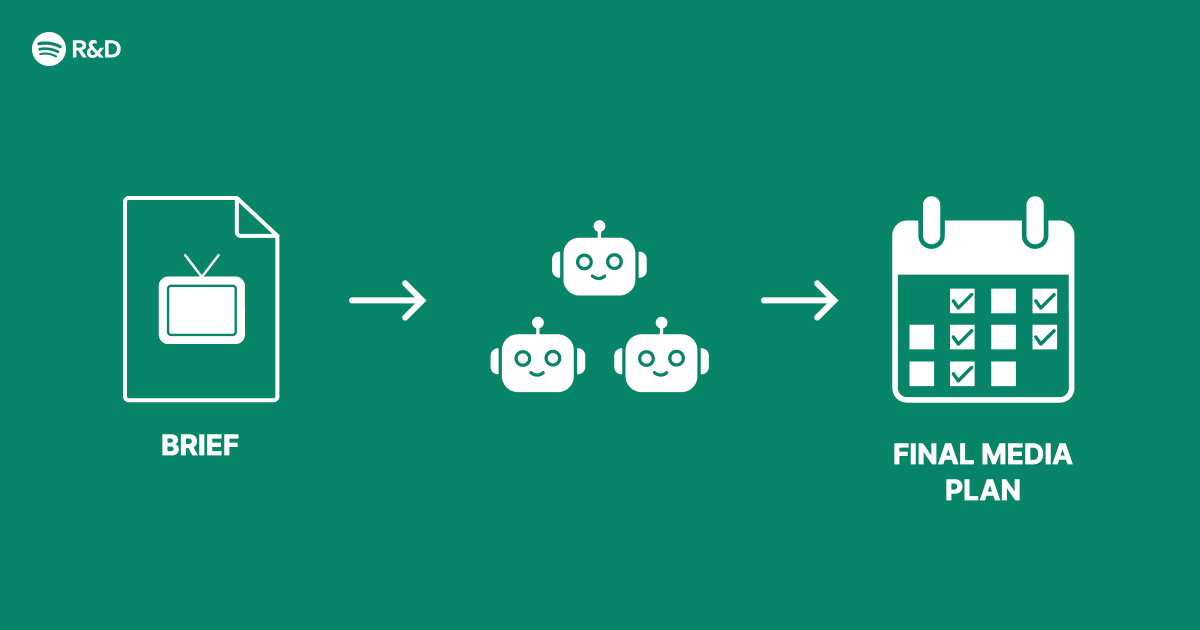

Advertising at scale demands decision-making that adapts in real time. For many engineering teams, the challenge is not shipping a single AI feature but restructuring the whole ad system to handle complex, dynamic requirements. This guide walks you through building a multi-agent architecture for smarter advertising: an approach that distributes decision-making across specialized agents to improve relevance, efficiency, and responsiveness. The following steps reflect real-world practices used by platforms like Spotify to overcome structural limitations.

What You Need

Prerequisites & Materials

- Data Pipeline: Real-time streams of user behavior, ad inventory, and performance metrics (e.g., click-through rates, conversions).

- Machine Learning Platform: Tools for training and deploying models (e.g., TensorFlow, PyTorch, or a custom ML pipeline).

- Orchestration Framework: Infrastructure to deploy and schedule agents, such as Kubernetes for running agent services, Apache Airflow for batch jobs like retraining, or a custom scheduler for per-request coordination.

- Scalable Compute Resources: Cloud infrastructure (AWS, GCP, Azure) with GPU/TPU availability for inference.

- Team Expertise: Engineers with backgrounds in reinforcement learning, distributed systems, and ad-tech.

- Baseline Ad System: An existing monolith or rule-based engine to compare against.

Step-by-Step How-To Guide

Step 1: Decompose the Advertising Problem into Specialized Tasks

Identify the primary decisions your ad system makes—such as user profiling, ad selection, bid optimization, budget pacing, and fraud detection. Instead of one monolithic model, assign each decision to a specialized agent. For example:

- User Intent Agent: Analyzes real-time signals to determine user interests.

- Ad Relevance Agent: Matches user intent with available ad creatives.

- Bidding Agent: Adjusts bids to maximize ROI while staying within budget.

- Budget Pacing Agent: Allocates daily spend to avoid early exhaustion.

This decomposition mirrors the multi-agent architecture used by Spotify to fix structural inefficiencies in their ad platform.
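One way to make the decomposition concrete is to give every agent the same small interface and pass a shared request object between them. The sketch below is illustrative only: `AdRequest`, `Agent`, and the toy scoring logic are hypothetical names and assumptions, not part of any published architecture.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class AdRequest:
    """Shared context passed from agent to agent for one ad request."""
    user_id: str
    signals: dict[str, float]                      # hypothetical real-time signals
    intent: dict[str, float] = field(default_factory=dict)
    ranked_ads: list[str] = field(default_factory=list)


class Agent(Protocol):
    """Common interface: each agent reads the request and enriches it."""
    name: str

    def act(self, request: AdRequest) -> AdRequest: ...


class UserIntentAgent:
    name = "user_intent"

    def act(self, request: AdRequest) -> AdRequest:
        # Normalize raw signal weights into an interest distribution.
        total = sum(request.signals.values()) or 1.0
        request.intent = {k: v / total for k, v in request.signals.items()}
        return request


class AdRelevanceAgent:
    name = "ad_relevance"

    def __init__(self, ad_topics: dict[str, str]):
        self.ad_topics = ad_topics                 # ad_id -> topic (toy inventory)

    def act(self, request: AdRequest) -> AdRequest:
        # Rank ads by the user's interest in each ad's topic.
        request.ranked_ads = sorted(
            self.ad_topics,
            key=lambda ad: request.intent.get(self.ad_topics[ad], 0.0),
            reverse=True,
        )
        return request


request = AdRequest(user_id="u1", signals={"sports": 3.0, "music": 1.0})
request = UserIntentAgent().act(request)
request = AdRelevanceAgent({"ad_a": "music", "ad_b": "sports"}).act(request)
```

Because both agents satisfy the same `Agent` protocol, a bidding or pacing agent can be added later without changing the callers.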

Step 2: Design the Inter-Agent Communication Protocol

Agents must share information without overwhelming the system. Define a structured message format (e.g., JSON or Protocol Buffers) and a central message broker (like Kafka or RabbitMQ). Each agent subscribes to relevant topics and publishes results. For instance, the User Intent Agent outputs a feature vector; the Ad Relevance Agent consumes it and returns a ranked list. This loose coupling allows independent scaling and updates.
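The publish/subscribe flow described above can be sketched with a JSON message envelope and an in-process broker. `InMemoryBroker` is a stand-in for Kafka or RabbitMQ so the example runs without external services; the topic names, the `AgentMessage` fields, and the ad weight vectors are all assumptions made for illustration.

```python
import json
from collections import defaultdict
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass
class AgentMessage:
    request_id: str
    sender: str
    payload: dict

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def from_json(raw: str) -> "AgentMessage":
        return AgentMessage(**json.loads(raw))


class InMemoryBroker:
    """Stand-in for a real broker; delivers synchronously, in process."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)      # topic -> list of handlers

    def subscribe(self, topic: str, handler: Callable[[str], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: AgentMessage) -> None:
        raw = message.to_json()
        for handler in self._subscribers[topic]:
            handler(raw)


broker = InMemoryBroker()
ranked_results: list[AgentMessage] = []


def ad_relevance_handler(raw: str) -> None:
    msg = AgentMessage.from_json(raw)
    features = msg.payload["features"]
    # Toy scoring: dot product of the feature vector with per-ad weights.
    ad_weights = {"ad_a": [0.1, 0.9], "ad_b": [0.8, 0.2]}
    scores = {
        ad: sum(w * f for w, f in zip(weights, features))
        for ad, weights in ad_weights.items()
    }
    ranked = sorted(scores, key=scores.get, reverse=True)
    broker.publish(
        "ads.ranked",
        AgentMessage(msg.request_id, "ad_relevance", {"ranked": ranked}),
    )


broker.subscribe("user.intent", ad_relevance_handler)
broker.subscribe(
    "ads.ranked", lambda raw: ranked_results.append(AgentMessage.from_json(raw))
)

# The User Intent Agent publishes a feature vector for one request.
broker.publish(
    "user.intent", AgentMessage("req-1", "user_intent", {"features": [1.0, 0.0]})
)
```

Carrying `request_id` in every message lets downstream consumers correlate results from different agents for the same ad request.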

Step 3: Implement Reinforcement Learning for Each Agent

Train each agent using reinforcement learning (RL) with a shared global objective (e.g., total ad revenue or user engagement). Use a centralized critic (a value network) to evaluate joint actions while each agent keeps its own policy network. This hybrid approach, known in multi-agent RL as centralized training with decentralized execution, enables agents to learn cooperative behaviors. Start with a simple Q-learning setup over discrete actions, then move to actor-critic methods (e.g., MADDPG) for continuous actions.
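As a starting point, the simple Q-learning setup can be sketched for a single bidding agent in a toy market. This is tabular, single-agent Q-learning, not MADDPG and not a centralized critic. The bid levels, clearing prices, and impression value are invented numbers chosen only to make the example small.

```python
import random

BIDS = [0.5, 1.0, 1.5]        # discrete bid levels (the actions)
CLEARING = [0.4, 0.9, 1.4]    # hypothetical clearing price per market state
IMPRESSION_VALUE = 2.0        # assumed value of a won impression


def step(state: int, action: int, rng: random.Random) -> tuple[float, int]:
    """Return (reward, next_state) for bidding BIDS[action] in `state`."""
    bid = BIDS[action]
    reward = IMPRESSION_VALUE - bid if bid >= CLEARING[state] else 0.0
    return reward, rng.randrange(len(CLEARING))


def train(steps: int = 20_000, alpha: float = 0.05, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0) -> list[list[float]]:
    rng = random.Random(seed)
    q = [[0.0] * len(BIDS) for _ in CLEARING]
    state = 0
    for _ in range(steps):
        # Epsilon-greedy: explore occasionally, otherwise exploit.
        if rng.random() < epsilon:
            action = rng.randrange(len(BIDS))
        else:
            action = max(range(len(BIDS)), key=lambda a: q[state][a])
        reward, next_state = step(state, action, rng)
        # Q-learning update toward the bootstrapped target.
        target = reward + gamma * max(q[next_state])
        q[state][action] += alpha * (target - q[state][action])
        state = next_state
    return q


q_table = train()
greedy_bids = [
    BIDS[max(range(len(BIDS)), key=lambda a: row[a])] for row in q_table
]
```

In this toy setup the greedy policy should settle on the lowest bid that still clears in each market state, since overbidding only reduces the reward.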

Step 4: Build an Orchestration Layer for Agent Coordination

The orchestration layer synchronizes agent executions and resolves conflicts. For example, the Bidding Agent might suggest a high bid, but the Budget Pacing Agent may cap it to conserve spend. Resolve such conflicts with a coordinator agent or a fixed priority ruleset. Spotify’s architecture likely uses a lightweight scheduler to run agents in a defined order per ad request: first user profiling, then ad selection, then bidding, then budget check. Keep end-to-end latency low; a budget of under 50 milliseconds per request is typical.
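A fixed-order scheduler with a priority rule and a latency budget might look like the sketch below. The stage functions are placeholders for the real agents, and `max_bid_allowed`, the fail-closed behavior on timeout, and the context dictionary layout are assumptions for illustration.

```python
import time
from typing import Callable

Stage = Callable[[dict], dict]


def profile_user(ctx: dict) -> dict:
    ctx["segment"] = "sports_fan"      # placeholder for the User Intent Agent
    return ctx


def select_ad(ctx: dict) -> dict:
    ctx["ad_id"] = "ad_42"             # placeholder for the Ad Relevance Agent
    return ctx


def propose_bid(ctx: dict) -> dict:
    ctx["bid"] = 3.00                  # placeholder for the Bidding Agent
    return ctx


def check_budget(ctx: dict) -> dict:
    # Priority rule: the pacing cap always wins over the bidder's proposal.
    ctx["bid"] = min(ctx["bid"], ctx["max_bid_allowed"])
    return ctx


PIPELINE: list[tuple[str, Stage]] = [
    ("user_profiling", profile_user),
    ("ad_selection", select_ad),
    ("bidding", propose_bid),
    ("budget_check", check_budget),
]


def handle_request(ctx: dict, budget_ms: float = 50.0) -> dict:
    """Run stages in order; stop early if the latency budget is exhausted."""
    start = time.perf_counter()
    ctx["completed"] = []
    for name, stage in PIPELINE:
        elapsed_ms = (time.perf_counter() - start) * 1000
        if elapsed_ms > budget_ms:
            ctx["timed_out_before"] = name
            ctx["bid"] = 0.0           # fail closed: no bid on partial results
            break
        ctx = stage(ctx)
        ctx["completed"].append(name)
    return ctx


result = handle_request({"max_bid_allowed": 1.25})
```

Here the Bidding Agent proposes 3.00 and the budget check caps it at 1.25, which is the conflict resolution the fixed priority ruleset is meant to provide.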

Step 5: Train and Test with Simulated Ad Auctions

Before deploying online, create a simulation environment that mimics real ad auctions using historical data. Train agents in this sandbox, monitoring key metrics like click-through rate (CTR), conversion rate, and ad revenue. Compare your multi-agent system against the baseline on the same replayed traffic, and reserve live A/B testing for the incremental rollout in Step 6. Fine-tune hyperparameters (learning rate, discount factor) and adjust reward functions so that agents do not settle into poor local optima.
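A minimal replay simulator could look like the following. It assumes a second-price auction (the winner pays the highest competing bid), which is an assumption of this sketch rather than something the guide specifies. The `LoggedAuction` record and the four log entries are made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoggedAuction:
    competing_bids: list[float]    # bids from other advertisers in the log
    clicked: bool                  # whether the impression led to a click


def simulate(policy: Callable[[LoggedAuction], float],
             log: list[LoggedAuction]) -> dict[str, float]:
    """Replay logged auctions under `policy`, which returns a bid per auction."""
    wins = clicks = 0
    spend = 0.0
    for auction in log:
        bid = policy(auction)
        top_rival = max(auction.competing_bids, default=0.0)
        if bid > top_rival:
            wins += 1
            spend += top_rival     # second-price rule: pay the runner-up bid
            clicks += int(auction.clicked)
    return {
        "win_rate": wins / len(log) if log else 0.0,
        "ctr": clicks / wins if wins else 0.0,
        "spend": spend,
    }


log = [
    LoggedAuction([0.8, 0.5], clicked=True),
    LoggedAuction([1.2], clicked=False),
    LoggedAuction([0.3, 0.9], clicked=True),
    LoggedAuction([2.0], clicked=False),
]

baseline = simulate(lambda auction: 1.0, log)     # flat-bid baseline
candidate = simulate(lambda auction: 1.5, log)    # more aggressive candidate
```

Replaying logged clicks is only an approximation: the log records what happened under the old system, so a realistic simulator also needs a model of how users would respond to ads that were never actually shown.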

Step 6: Deploy Incrementally with Shadow Mode

Run your multi-agent system in shadow mode alongside the existing system: the agents log their decisions without affecting live traffic. This validates the architecture and catches edge cases (e.g., agent deadlock or stale data). Once shadow results hold up, gradually shift live traffic to the new system: start with 1%, then 10%, 50%, and finally 100%. Monitor for regressions in ad performance and system latency at each stage.
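Shadow mode and the percentage ramp can share one code path, as in the sketch below. It assumes requests carry a stable string ID and uses a hash of that ID to assign traffic deterministically. `serve`, `in_rollout`, and the log record layout are hypothetical names.

```python
import hashlib
from typing import Callable

RAMP_STAGES = [0.0, 0.01, 0.10, 0.50, 1.00]    # 0.0 means shadow-only


def in_rollout(request_id: str, fraction: float) -> bool:
    """Deterministically assign a stable fraction of requests to the new system."""
    digest = hashlib.sha256(request_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64    # uniform in [0, 1)
    return bucket < fraction


def serve(request_id: str, fraction: float,
          legacy: Callable[[str], str], multi_agent: Callable[[str], str],
          shadow_log: list) -> str:
    if in_rollout(request_id, fraction):
        return multi_agent(request_id)
    legacy_ad = legacy(request_id)
    # Shadow path: compute and log the new decision, but serve the legacy one.
    try:
        shadow_ad = multi_agent(request_id)
        shadow_log.append({
            "request_id": request_id,
            "legacy": legacy_ad,
            "shadow": shadow_ad,
            "agree": legacy_ad == shadow_ad,
        })
    except Exception as exc:    # shadow failures must never reach live traffic
        shadow_log.append({"request_id": request_id, "error": repr(exc)})
    return legacy_ad


shadow_log: list = []
served = serve("req-1", RAMP_STAGES[0], lambda r: "legacy_ad",
               lambda r: "agent_ad", shadow_log)
```

Hashing the request ID rather than drawing a random number keeps assignment reproducible, which makes regressions easier to trace to specific requests. In production the shadow call would usually run asynchronously so it cannot add latency to the live response.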

Step 7: Implement Continuous Learning and Feedback Loops

Agents must adapt to changing user behavior and advertiser demand. Set up a feedback loop where the system periodically retrains agents using fresh data. For example, once a day, the User Intent Agent updates its model with the latest click logs. Use online learning for agents that need near-real-time adaptation (e.g., the Bidding Agent). Spotify’s architecture likely includes automated pipelines that trigger retraining when performance drops below a threshold.
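A threshold-based retraining trigger can be sketched as a rolling-window monitor. The choice of CTR as the metric, the 90% tolerance, and the window size are assumptions for illustration; `RetrainTrigger` is a hypothetical class, not part of any particular pipeline tool.

```python
from collections import deque


class RetrainTrigger:
    """Fire when the rolling CTR falls below a fraction of a reference CTR."""

    def __init__(self, reference_ctr: float, tolerance: float = 0.9,
                 window: int = 1000):
        self.threshold = reference_ctr * tolerance
        self.window = window
        self.outcomes = deque(maxlen=window)    # 1 = click, 0 = no click

    def record(self, clicked: bool) -> bool:
        """Record one impression outcome; return True if retraining is due."""
        self.outcomes.append(int(clicked))
        if len(self.outcomes) < self.window:
            return False                        # wait for a full window
        rolling_ctr = sum(self.outcomes) / len(self.outcomes)
        return rolling_ctr < self.threshold


trigger = RetrainTrigger(reference_ctr=0.05, window=100)

# Healthy period: 5 clicks in 100 impressions matches the reference CTR.
healthy = [trigger.record(i % 20 == 0) for i in range(100)]

# Degraded period: clicks stop, so the rolling CTR drops below the threshold.
degraded = [trigger.record(False) for _ in range(100)]
```

In a real pipeline the `True` result would enqueue a retraining job rather than retrain inline, and a cooldown would prevent the trigger from firing on every impression while performance stays low.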

Step 8: Monitor and Debug Agent Interactions

Add extensive logging and visualization tools (e.g., dashboards in Grafana) to track each agent’s decisions and the overall system health. Look for anomalies like an agent dominating decision-making or conflicting recommendations. Implement explanation modules that output why a particular ad was shown—useful for both internal debugging and advertiser transparency.
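One concrete signal for conflicting recommendations is how often an agent's proposal is overridden downstream. The sketch below emits one structured JSON log line per decision and aggregates an override rate per agent. The field names and the `ad_agents` logger name are assumptions; a dashboard tool would read the same records from the log stream.

```python
import json
import logging
from collections import Counter

logger = logging.getLogger("ad_agents")


def log_decision(request_id: str, agent: str,
                 proposed_bid: float, final_bid: float) -> dict:
    """Emit one structured record per agent decision and return it."""
    record = {
        "request_id": request_id,
        "agent": agent,
        "proposed_bid": proposed_bid,
        "final_bid": final_bid,
        "overridden": proposed_bid != final_bid,
    }
    logger.info(json.dumps(record))
    return record


def override_rates(records: list[dict]) -> dict[str, float]:
    """Share of each agent's proposals that were changed downstream."""
    totals: Counter = Counter()
    overridden: Counter = Counter()
    for record in records:
        totals[record["agent"]] += 1
        overridden[record["agent"]] += int(record["overridden"])
    return {agent: overridden[agent] / totals[agent] for agent in totals}


records = [
    log_decision("req-1", "bidding", proposed_bid=3.0, final_bid=1.25),
    log_decision("req-2", "bidding", proposed_bid=1.0, final_bid=1.0),
    log_decision("req-3", "bidding", proposed_bid=2.5, final_bid=1.25),
    log_decision("req-3", "budget_pacing", proposed_bid=1.25, final_bid=1.25),
]
rates = override_rates(records)
```

A persistently high override rate for the Bidding Agent suggests its objective is misaligned with the pacing constraints, which is easier to spot as a single number than by reading individual log lines.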

Tips for Success

- Start simple: Begin with two or three agents (e.g., user profiling and ad selection) before adding more.

- Invest in simulation: A realistic simulator saves months of failed online experiments.

- Keep human oversight: Use rule-based overrides for critical decisions (e.g., brand safety rules).

- Plan for latency: Multi-agent communication can introduce delays; optimize with caching and parallel execution where possible.

- Document agent behaviors: Each agent’s objective and constraints should be clearly defined to avoid conflicts.

- Iterate based on business metrics: Focus on revenue, user experience, and advertiser satisfaction rather than technical complexity.

- Consider ethical implications: Ensure the system avoids bias and respects user privacy—regular audits are essential.
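The latency tip above can be illustrated with Python's standard library: `functools.lru_cache` for lookups that rarely change, and `asyncio.gather` for agents that do not depend on each other. The agent functions, the simulated 20 ms delays, and `creative_format` are placeholders invented for this sketch.

```python
import asyncio
import time
from functools import lru_cache


@lru_cache(maxsize=10_000)
def creative_format(ad_id: str) -> str:
    # Placeholder for a slow metadata lookup; cached because creatives
    # change rarely compared with the request rate.
    return "audio"


async def user_intent(request_id: str) -> str:
    await asyncio.sleep(0.02)      # simulated 20 ms model call
    return "sports"


async def fraud_score(request_id: str) -> float:
    await asyncio.sleep(0.02)      # simulated 20 ms model call
    return 0.01


async def handle(request_id: str) -> tuple[str, float, float]:
    start = time.perf_counter()
    # The two agents are independent, so run them concurrently.
    intent, fraud = await asyncio.gather(
        user_intent(request_id), fraud_score(request_id)
    )
    elapsed_ms = (time.perf_counter() - start) * 1000
    return intent, fraud, elapsed_ms


intent, fraud, elapsed_ms = asyncio.run(handle("req-1"))
```

Run concurrently, the two calls take roughly as long as the slower one rather than the sum of both. Only agents with no data dependency on each other can be parallelized this way; ad selection still has to wait for user intent.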

By following these steps, you can build a multi-agent architecture that transforms advertising from a rigid, monolithic system into a dynamic, intelligent ecosystem. This approach, inspired by Spotify’s engineering journey, fixes structural bottlenecks and unlocks smarter ad delivery at scale.